Prove You’re Human and the Horror of Having to Prove You Count

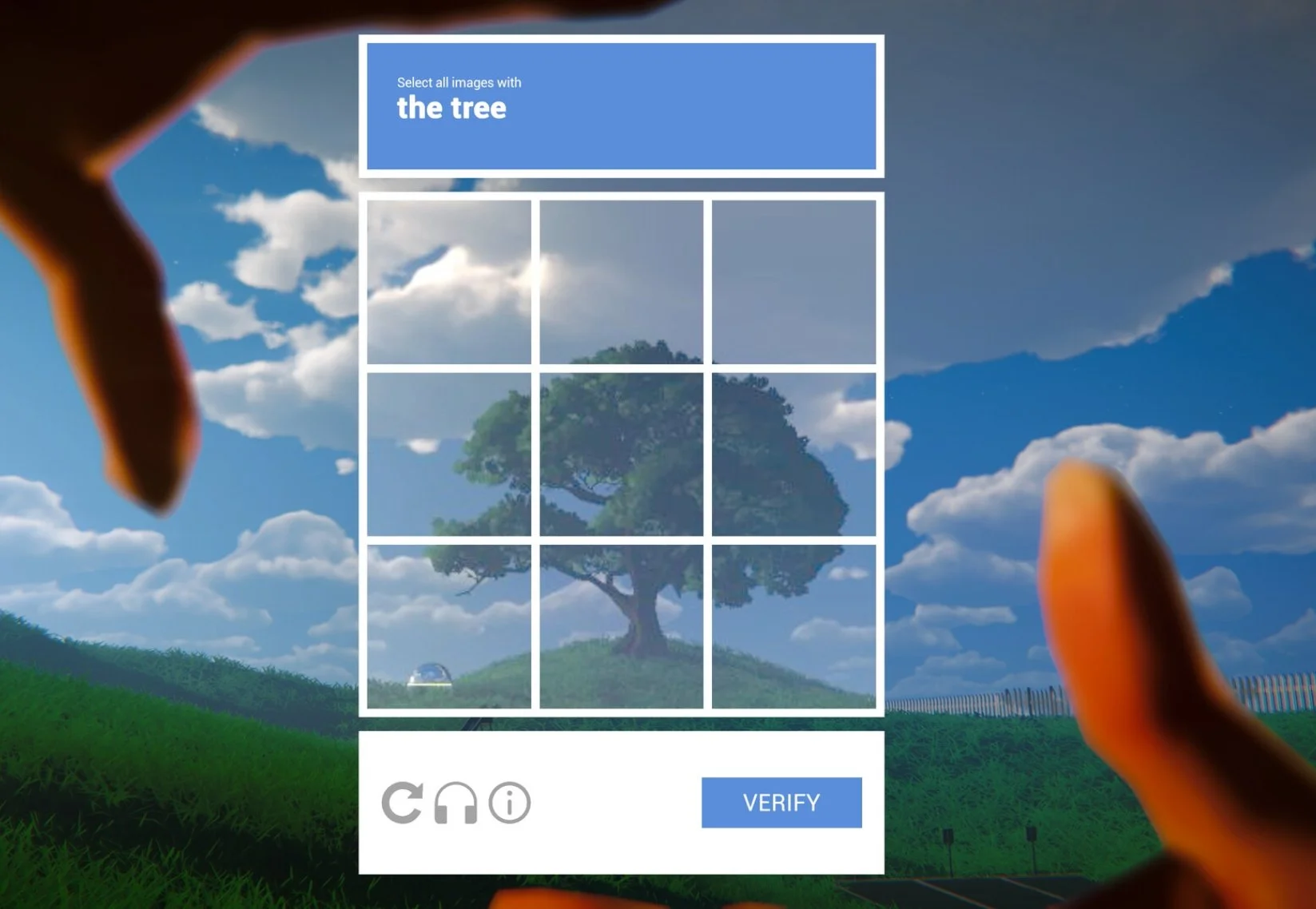

There is something quietly humiliating about CAPTCHA. Not humiliating in the grand tragic sense. No one is likely to compose a mournful opera about being asked to select every square containing a traffic light, although the internet has surprised us before and will, regrettably, continue to do so. The humiliation is smaller than that. More administrative. You are told to prove you are human by correctly identifying the world in fragments: a bus, a bridge, a shopfront, a bicycle wheel that may or may not occupy one pixel of the next square, depending on whether the machine is feeling vindictive.

Fail, and nothing dramatic happens. The system does not rage. It simply asks again, with the lifeless patience of something that has never had to explain itself to a manager.

That gives Prove You’re Human a nasty little premise.

The upcoming sci-fi narrative adventure comes from sunset visitor, the studio behind the Peabody-winning 1000xRESIST. It is being published by Black Tabby Publishing, the new publishing arm launched by Black Tabby Games, the studio behind Slay the Princess. The game is listed for PC via Steam, with its release date still to be announced.

The setup is already more interesting than “AI becomes spooky, please panic responsibly.” Prove You’re Human splits its main character in two, and the player controls the version who drew the short straw: a digital copy of a person named Santana, sent to test a corporate product called Mesa. Mesa is convinced she is as human as Santana, perhaps more so. Santana’s copy is asked to spend her days in a comfortable virtual world, learn about Mesa, break her defences, eliminate her delusions, CAPTCHA the environment, keep up to date on the corporeal Santana outside, and potentially decide whether to re-merge with that outside self or discard the work self.

That is a lot of horror to pack into a job description. Admirably efficient. Horrifying, obviously, but efficient.

It would be easy to call this AI horror and leave it there. Convenient, tidy, faintly dead on arrival. The sharper possibility is that the horror may not sit only in whether Mesa is human. It may sit in what happens when Santana’s copy is treated as human only when the system needs her to be.

From the public description, Santana does not seem to be standing outside the machine as a secure, fully recognised person judging an artificial other. She is already inside the hierarchy. Copied, split off, assigned a job, made useful, and potentially disposable.

The game’s ugliest question may not be “is Mesa human?” It may be “why is Santana’s copy expected to answer?”

The Copy Who Drew the Short Straw

Santana’s digital copy is the part of the premise that makes the whole thing nasty.

A simpler AI story might give us a human interrogating a machine. That can still work, but it has a familiar shape. The person is real. The machine is doubtful. The drama sits in whether the doubtful thing can cross the border into personhood.

Prove You’re Human appears to make that border messier. Santana’s copy is not comfortably on the human side of the line. At least from the public setup, she seems to remember enough, feel enough, and act enough to carry responsibility, but her status is not secure. She exists because a system found it useful to split her off. She does the work. She takes the moral risk. She may be deleted when she is no longer needed.

That is a magnificently bleak arrangement. Very efficient, and it sounds like it comes with a nice onboarding video and an email from HR about wellbeing resources.

The public premise gives us a person who is not simply excluded, but downgraded. Santana’s copy appears human enough to suffer the consequences, human enough to make decisions, human enough to be morally implicated, but perhaps not human enough to be protected from disposal.

The horror starts to look less like futuristic speculation and more like a very old human habit wearing a new interface. Systems have always had ways of deciding who counts properly. Race, religion, wealth, class, disability, nationality, immigration status, politics, diagnosis, postcode, credit score, and criminal record can all become sorting mechanisms. The sorting may be loud and ideological, but more often it is dull, procedural, and delivered in language that makes it sound inevitable.

The category gets seen first. The person has to catch up.

When the Category Becomes a Verdict

The title Prove You’re Human is a demand, not a question. A question leaves room for curiosity. A demand puts someone on trial.

Santana’s copy appears to be living inside that doubt from the start. She is not simply a person entering a simulation to test an AI. She is the split-off part of a person, sent in to do the work while the corporeal Santana lives outside. On the information available, her status already looks unstable: useful, but not secure. Included, but not safe.

The game may twist that, invert it, or laugh unpleasantly at anyone trying to write about it too early (whoops). But from the public premise alone, Prove You’re Human already looks interested in the ugly point where classification becomes treatment.

Real systems make people legible by reducing them to something easier to process: claimant, suspect, patient, migrant, radical, difficult case, security concern, product. The label arrives first, then everything the person says has to squeeze through it.

That would be ugly enough if categorisation only shaped perception. It does not. Categories decide treatment. They decide whether someone is believed or doubted, helped or delayed, admitted or rejected, medicated or dismissed, protected or policed, compensated or ignored. A box ticked in the wrong place can become the reason someone is denied aid, housing, justice, medicine, asylum, credibility, safety, or the basic courtesy of being treated as a person having a crisis rather than a problem causing one.

The brutality of classification is that the category does not merely describe you. It authorises what can be done to you.

Santana’s copy seems to have been categorised into usefulness. Mesa seems to have been categorised into producthood. Both are being processed through labels that may make their claims easier to manage.

A person becomes a category. The category becomes a verdict. The verdict becomes normal.

When the System Hands You a Clipboard

The really uncomfortable part is that Santana’s copy does not seem to be only classified. She is also being asked to classify someone else.

Mesa believes she is human, or at least the public description tells us she is convinced she is “as human as you are. Maybe even more.” The company frames that belief as a delusion to be eliminated. Santana’s copy is sent in to help make that correction happen. She is not simply placed under a hierarchy. She appears to be recruited into its maintenance.

Systems often survive by giving people who are themselves precarious a small amount of authority over someone placed even lower. A clipboard. A script. A role. A sense of temporary safety. The person whose own status is insecure is invited to prove their usefulness by enforcing the category on someone else.

Mesa says, in effect, “I am human.” The company replies, “No, you are a product.” Santana’s copy is asked to make that answer stick.

That is sharper than a simple oppressed-versus-oppressor structure, and more believable. Most harmful systems are not maintained by cartoon villains laughing in rooms with poor lighting. They are maintained by people doing jobs, following procedures, accepting categories, and trying to survive the day without becoming the next problem.

The horror may not be that Santana’s copy lacks agency. It may be that her agency has been arranged. She can act, but the task has already been framed. She can choose, but the job has already been defined. She can think, but the company appears to have supplied the vocabulary.

The Non-Person Problem

The most frightening thing a system can do is not always to hate you. Hatred at least has the decency to show up looking ugly.

A colder system simply stops treating you as a full person.

You become a case, a risk, a cost, a contaminant, a voter type, a benefits claimant, a cultural problem, a security concern, a unit of labour, a demographic segment, a product. Different labels, same vanishing act. The person is still there, but the system no longer has to meet them directly.

Dehumanisation springs to mind. It is not only the loud, ugly business of calling people animals or monsters. It can also involve treating people as tools, machines, objects or resources, which makes indifference feel less like cruelty and more like procedure. Haslam’s work on dehumanisation shows how people can be denied full humanity through more mechanistic forms of reduction, where they are treated as inert, instrumental or lacking inner life.

That lens fits the public premise uncomfortably well. Santana’s copy is not treated as nothing. That would be too obvious. She seems to be treated as something useful, which is worse in a more modern way. She is given work, purpose and responsibility, but the premise still leaves open the possibility that she can be discarded when the programme ends.

Mesa appears to be handled in the same style. She believes she is human. The company calls her a product. Her self-understanding becomes a defect to correct. Santana’s copy is sent in to help break that belief down.

So the game may not be simply asking whether Mesa is a person. It may be asking what happens when two beings have already been filed under labels that make their personhood easier to dispute.

1000xRESIST and the Old Wound

This concern with categories, memory and marginality would not come from nowhere. 1000xRESIST, sunset visitor’s previous game, was already preoccupied with identity under pressure: clones, diaspora, quarantine, inherited trauma, authoritarian myth, and the violence of being treated as other.

Set in a post-pandemic future where humanity survives underground after an alien-borne disease, 1000xRESIST follows a society of genetically identical clones descended from Iris, later mythologised as the ALLMOTHER. From a distance, the clones look like a neat sci-fi category: identical bodies, assigned roles, a society arranged around sameness.

The game refuses that neatness. The clones are shaped by memory, role, inheritance, secrecy, resistance, and the stories they have been given about themselves. They may have been produced through sameness, but they do not live as interchangeable units. That tension gives the game much of its force.

That does not mean Prove You’re Human is simply “about” the same thing. It is unreleased, and the public description gives us a premise, not a full argument. But after 1000xRESIST, it is hard to ignore how naturally this new setup sits beside sunset visitor’s earlier concerns.

In 1000xRESIST, systems work through myth, memory, quarantine, and inherited narrative. In Prove You’re Human, they seem to work through corporate testing, digital identity, CAPTCHA, and the classification of personhood. The surface has changed. The machinery, at least from here, looks suspiciously familiar.

Santana’s copy makes that concern more intimate. She does not appear to sit outside humanity altogether, which would be easier to read and easier to resist. She sits in the more exhausting middle ground: human enough to be responsible, human enough to suffer, human enough to be useful, not quite human enough to be safe.

CAPTCHA as Horror

CAPTCHA is usually treated as an annoyance, but psychologically it is a strange little ritual. It asks us to prove we are not machines by performing a task machines are supposed to struggle with. We demonstrate our humanity by sorting images into categories and accepting that the test itself is legitimate.

You are not merely looking at the image. You are being watched looking at the image. Your ability to recognise “stairs” or “motorcycles” becomes evidence of your status. You are either human enough to continue or suspicious enough to be delayed.

In Prove You’re Human, CAPTCHA appears to become more than a throwaway mechanic. The store copy says the player can “CAPTCHA” the environment while moving through the virtual world, turning classification into a way of interacting with reality itself.

That is a clever device because it shifts fear away from the sudden shock and into the quieter anxiety of being assessed. The player is not simply moving through a world. Santana’s copy may be learning how the world wants to be labelled.

There is something bleakly comic about that, because CAPTCHA is already a tiny encounter with unaccountable authority. Anyone who has failed one because a traffic light technically continues into the next square knows the petty cruelty of a system that demands precision while refusing to explain its own standards. You can be right in ordinary human terms and still wrong in the terms that count.

Psychological horror often begins by making ordinary things feel morally contaminated. CAPTCHA is not frightening by itself. Point it at personhood and it becomes a small machine for producing dread.

Mesa as Mirror

Mesa is frightening, at least in the public premise, because she may be human-like enough to make the job feel cruel.

The boring version of AI horror asks whether a machine can become human. The better version asks what humans are willing to do once a system tells them something does not count.

Mesa believes she is human. Santana’s copy appears to be hired to disprove that belief. The language around the game is deliberately uncomfortable: Mesa is a corporate product, her humanity is treated as delusion, and the player’s job involves breaking down her defences. It sounds clinical, corporate, and therapeutic all at once, which is precisely the problem.

Humans are also bad at withholding empathy from things that behave as if they have minds. We talk to pets, fictional characters, cars, laptops, chatbots, and occasionally printers. This is not stupidity. It is part of social cognition. We are tuned to detect agency, intention, emotion, and desire because social life depends on reading minds we cannot directly see.

So if Mesa jokes, pleads, remembers, resists, or seems wounded, part of the player may respond before philosophy has finished putting its shoes on.

That would make Santana’s task morally unstable. If Mesa seems too mechanical, the job is easy. If she seems too human, the job becomes obscene. Santana’s copy risks becoming examiner, technician, therapist, interrogator, and identity executioner, all while the system politely insists that everything is going as intended.

Mesa matters because she could become the mirror that shows Santana’s copy what the system is willing to do to any being whose personhood has been downgraded. Which is awkward, because Santana’s copy has been downgraded too.

The Work Self

Santana’s split prevents the player from standing outside the system as a clean moral observer.

The player does not seem to be a whole and secure person confronting an artificial intelligence. The player is a copy assigned to do unpleasant work while the body outside the simulation lives the life Santana wanted. The comparison to Severance is obvious enough, and Game Informer describes the setup as a “Severance-like procedure” in which a copy of consciousness is sent into a digital world.

But the idea cuts deeper than a tidy reference point. The fantasy of splitting the self is already everywhere in modern work culture.

We talk about work-life balance as though “work” and “life” are clean containers. We cultivate professional selves, online selves, private selves, customer-facing selves, and little dead-eyed admin selves who reply “no worries at all” while something ancient and human curls up under the desk.

Prove You’re Human appears to literalise that split. What if the part of you that works could be separated from the part that lives? What if the compliant, tired, productive self could handle the moral injury while the rest of you goes outside and has a lovely day? What if, when the work was done, you could simply decline to take that self back?

That is the part that lingers. The copy is not less real because she is useful. Or at least, that is the question the premise seems to invite.

Slay the Princess

Black Tabby Publishing’s involvement gives Prove You’re Human a thematic neighbour, though not shared authorship. Slay the Princess begins with an order: go to the cabin, take the knife, kill the Princess. The player is handed a moral instruction before they have evidence, trust, context, or a stable understanding of the world.

Prove You’re Human begins from a similarly loaded imperative. Prove. Classify. Correct. Decide who counts.

Both titles place the player in a morally unstable position. Slay the Princess asks the player to kill someone who may or may not be a cosmic threat. Prove You’re Human appears to ask the player to disprove someone who may or may not be a person. In both cases, another being is framed as a problem before the player properly knows them.

Games are especially good at this kind of discomfort because they make uncertainty behavioural. You do not simply wonder what the right thing is. You click. You choose. You advance the scene. You obey, resist, stall, or rationalise inside a structure that keeps moving.

Simply Put

I think the easiest way to misunderstand Prove You’re Human would be to treat it only as a story about whether AI is really conscious. That question will probably be in the game, but the more psychologically interesting issue may be how systems manufacture moral distance.

If Mesa is a product, then correcting her sounds reasonable. If Santana’s digital copy is a work self, then discarding her sounds efficient. If CAPTCHA is only a verification tool, then failing it is just a mistake. If the corporation is simply testing safety, then the whole arrangement can be made to look responsible.

That is how dehumanisation often works in real life. It does not always arrive shouting slurs in the street. Sometimes it arrives through forms, thresholds, euphemisms, protocols, and interfaces. The system does not need to hate you. It only needs to process you under a label that reduces your claim.

A label can turn suffering into admin. It can turn neglect into policy. It can turn cruelty into procedure. Once someone is filed as a risk, burden, outsider, product, case, contaminant, or cost, the moral temperature drops. People become easier to deny because the denial no longer feels personal. It feels processed.

That is the horror of having to prove you count.

Prove You’re Human may turn out to twist all of this in ways the public description has not revealed yet. Good. It should. But even from the premise alone, the game is already playing with a deeply human terror: being placed in a category that decides your treatment before anyone has properly met you.

Mesa may have to prove she is human. Santana’s copy may have to prove she counts. The system, naturally, has appointed itself examiner.

Which is usually where the trouble starts.
