The Dark Triad and the Moral Problem of Suddenly Having Something to Lose

In 2021, I completed my MSc in Applied Social and Political Psychology at Keele University with a dissertation on the Dark Triad and moral decision-making. Which is a tidy academic way of saying I spent a long time asking whether people with more psychopathic, Machiavellian, or narcissistic traits are more willing to sacrifice one person to save five, and whether that changes when the sacrifice might inconvenience them personally.

A wholesome little topic, obviously.

The study was called “An Exception to the Rule: Dark Triad and Moral Decision Making: The Effect of Time and Self-Cost on Dark-Triad Personality Traits when Making Moral Judgments.” It looked at how Dark Triad traits relate to utilitarian moral choices, especially under time pressure and when personal cost is added to the dilemma.

That last bit is where things get more interesting. It is one thing to say you would pull the lever in a trolley problem because five lives outweigh one. It is another thing to do it when pulling the lever means you might be fined, prosecuted, blamed, or dragged into the consequences yourself. The “greater good” has a strange habit of becoming less attractive when it arrives with paperwork.

The question behind the study

Moral psychology has a mildly irritating fondness for trolley problems, but they are useful for one reason: they force a decision.

Do you sacrifice one person to save five? Do you act, knowing your action directly causes harm? Or do you refuse, even if your refusal allows more people to die?

These dilemmas are usually framed through two broad moral traditions. A utilitarian choice focuses on outcomes: save the greatest number, minimise the total harm, do the grim maths and try not to look too closely at what the maths involves. A deontological choice focuses on moral rules or duties: some actions are wrong even when they produce better consequences.

Neither position is as clean as people like to pretend. Utilitarianism can sound noble until someone uses “the greater good” to justify something appalling. Deontology can sound principled until refusing to act becomes its own kind of harm. This is why I like moral psychology. It pulls the argument away from “what should a perfectly rational person do?” and toward the messier question: “what do actual people do, and why?”

My study focused on people scoring higher on Dark Triad traits: psychopathy, Machiavellianism, and narcissism. These traits are often associated with lower empathy, greater self-interest, and a more instrumental view of other people. That does not mean everyone with elevated traits is a villain polishing a skull in a candlelit office, but it does mean these traits are useful for studying colder, more self-serving patterns of moral judgement.

The key question was whether people with higher Dark Triad traits would show a stronger preference for utilitarian choices across different conditions. More specifically, would they still make utilitarian choices when forced to decide quickly? And would that preference survive when the decision came with a personal cost?

Why time pressure matters

A major idea behind the study was dual-process theory. In simple terms, moral decisions are often thought to involve two broad systems. One is quicker, more emotional, and more intuitive. The other is slower, more controlled, and more deliberative.

In many moral dilemma studies, time pressure pushes people toward more deontological responses. When people have less time to think, their emotional resistance to causing harm carries more weight. Given more time, people may be more willing to weigh outcomes and make the colder utilitarian choice.

That creates an interesting problem when you introduce the Dark Triad. If someone has lower emotional sensitivity to harm, then time pressure may not push them in the same direction. They may not need the same amount of deliberation to override the emotional “do not harm” response, because that response may be weaker to begin with.

That was one of the central ideas in the dissertation. If people high in Dark Triad traits are more likely to make utilitarian moral decisions, does that happen because they are doing more careful moral reasoning, or because the emotional brakes are not biting as hard?

Psychology does enjoy taking a simple question and giving it a basement.

What the study did

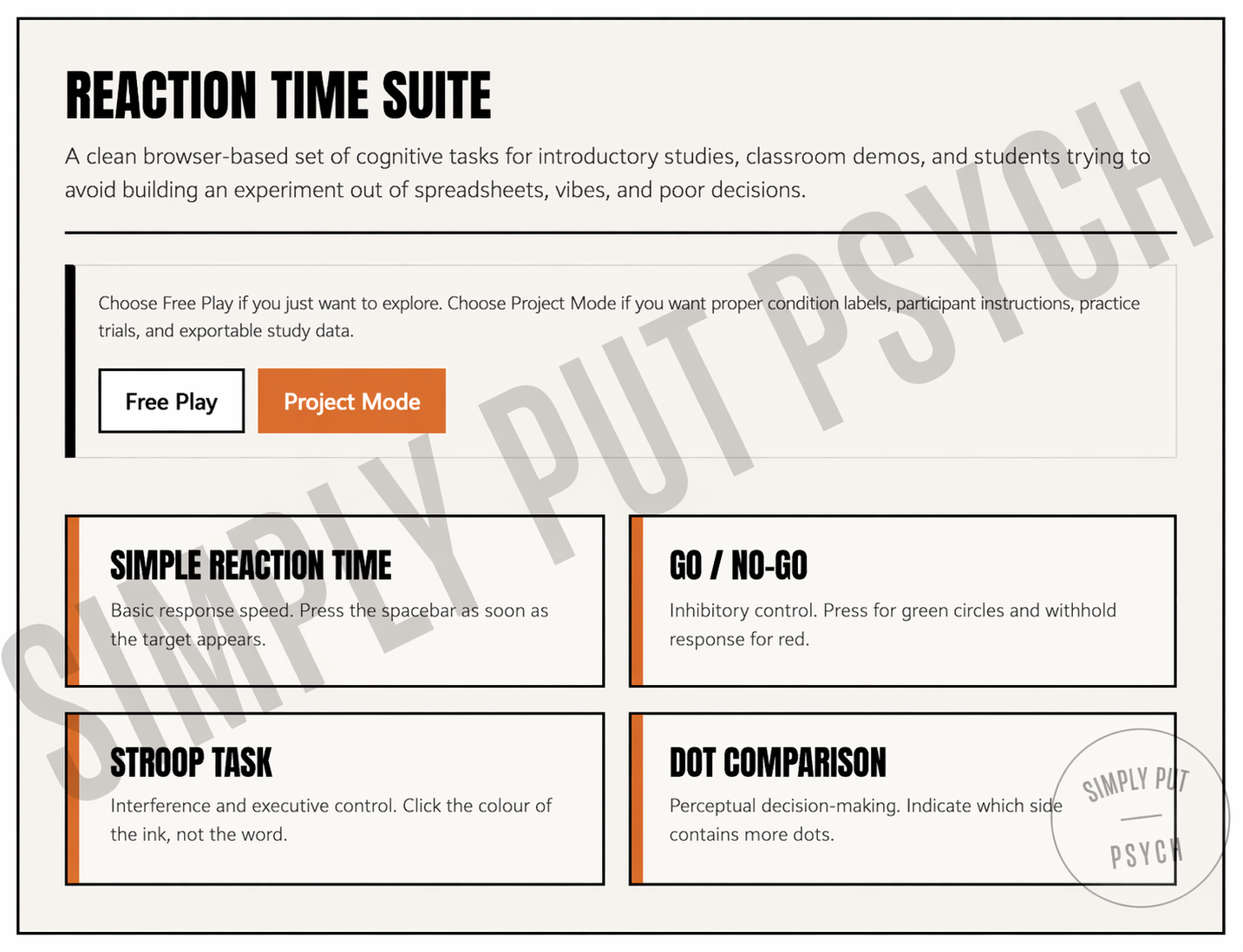

The study used 176 participants after data reduction. Participants completed the Short Dark Triad measure, which assesses psychopathy, Machiavellianism, and narcissism. They were then given 16 sacrificial moral dilemmas.

Some dilemmas were personal, meaning the harm was more direct and emotionally loaded. Others were impersonal, where the harm was more indirect. Half of the dilemmas were also modified to include a self-cost element. So instead of only asking, “Would you sacrifice one person to save five?” the modified versions introduced consequences such as legal punishment or personal loss.

Participants were placed into one of two time conditions. In the time pressure condition, they had 10 seconds to answer. In the contemplation condition, they were asked to think for at least one minute before responding.

The design allowed the study to test whether Dark Triad traits predicted utilitarian choices under fast and slow decision-making conditions, and whether that pattern changed when self-interest entered the scene.

What the study found

The broad finding was that Dark Triad traits did predict a greater preference for utilitarian responses in traditional sacrificial moral dilemmas. That supports previous research suggesting that darker personality traits are associated with a greater willingness to endorse harmful action when it produces a larger outcome benefit.

But the pattern became more interesting when the time conditions were separated.

Under time pressure, psychopathy was the strongest predictor of utilitarian decision-making. That makes psychological sense. Psychopathy is associated with impulsivity, lower emotional responsiveness, and reduced concern about harm. So when the decision had to be made quickly, those higher in psychopathic traits appeared more willing to choose the outcome-focused option.

In the contemplation condition, Machiavellianism became more important. Again, that fits the trait. Machiavellianism is less about impulsive coldness and more about calculation, strategy, and long-game thinking. Given time to consider the dilemma, people higher in Machiavellianism were more likely to favour the utilitarian option.

Narcissism, by comparison, did very little. It was the quiet guest at the Dark Triad dinner party, which is probably the one thing narcissism would least enjoy being.

That absence is interesting in itself. Narcissism often involves social image, status, and self-enhancement. It may not produce the same cold utilitarian pattern as psychopathy or Machiavellianism because the narcissistic concern is often less “what is the most efficient outcome?” and more “how do I appear in this situation?” There is a difference between being calculating and wanting good lighting.

The self-cost problem

The most useful part of the study, at least to me, was the self-cost manipulation.

When the moral dilemmas were altered so that the utilitarian choice carried a personal cost, the Dark Triad’s utilitarian preference was disrupted. In other words, the willingness to sacrifice one person for five became less reliable when the person making the decision had something to lose.

This complicates the lazy reading of utilitarian responses as “more rational” or “more morally advanced.” A utilitarian answer can come from genuine concern for total welfare. It can also come from emotional detachment, self-interest, or a willingness to treat people as pieces on a board. Same answer, very different psychology.

That is the awkward bit. Moral outcomes do not always reveal moral motives.

Someone may choose to save five lives because they care deeply about minimising suffering. Someone else may make the same choice because one stranger dying feels like an acceptable transaction. On paper, they match. Psychologically, they may be nowhere near each other.

The self-cost condition helped expose that difference. When personal consequences appeared, the “greater good” became less compelling for those darker traits. Not always, not perfectly, and not in a cartoonishly simple way, but enough to suggest that utilitarian reasoning among high Dark Triad scorers may be more self-interested than altruistic.

Which, to be fair, is not exactly a revelation that will make humanity look wonderful in the brochure.

Why this matters beyond trolley problems

It would be easy to dismiss sacrificial dilemmas as strange little thought experiments that belong in lecture slides and first-year seminars. Most people are not standing near railway switches deciding whether to redirect death with a lever. If you are, please stop reading and contact the relevant authorities.

But these dilemmas are useful because they exaggerate the structure of real decisions. They strip moral judgement down to consequences, harm, responsibility, and self-interest.

The real world is full of softer versions of the same pattern. A manager deciding whether to protect staff or protect the organisation. A politician deciding whether public harm is acceptable if the polling works. A professional deciding whether to report wrongdoing when doing so could damage their own position. A friend deciding whether honesty is worth the social cost.

The question is rarely, “Would you sacrifice one person to save five?” In normal life, it is more likely to be, “Would you do the right thing if it made your life more difficult?”

That is where the self-cost finding becomes more practically interesting. People can sound principled when the cost is hypothetical, distant, or paid by someone else. Add personal inconvenience and suddenly the moral architecture starts creaking.

What the MSc taught me

I could write the expected reflective paragraph here about academic growth, resilience, and the transformative power of research. There is truth in that, but it sounds like something written under fluorescent lighting by someone trying to satisfy a marking rubric.

The more honest version is this: the dissertation taught me how slippery psychological interpretation can be.

The data gave answers, but not clean ones. Psychopathy mattered more under pressure. Machiavellianism mattered more with time. Narcissism mostly wandered around refusing to pull its statistical weight. Self-cost interfered with the bigger pattern. The effects were meaningful but modest, because humans are unfortunately not lab mice with broadband.

That messiness was probably the best lesson. Research does not hand you a polished moral. It gives you a pattern, then asks you not to overclaim it. It gives you significance, then reminds you that significance is not the same as certainty. It gives you a finding, then quietly points toward six better studies you could design next time.

For someone interested in psychology as it actually applies to people, that was valuable. Not because it produced a neat conclusion, but because it forced a better kind of caution.

The uncomfortable conclusion

The study added support to the idea that Dark Triad traits are associated with utilitarian moral decisions, but with an important caveat. The reason behind a utilitarian choice matters.

Saving five instead of one sounds noble when described abstractly. Yet the same choice can be driven by compassion, calculation, detachment, self-protection, or a complete lack of emotional friction. The moral label does not reveal the motive.

That is the part I still find interesting. We often treat moral decisions as though the answer itself tells us who someone is. But the same answer can come from very different psychological places. A person can choose the “greater good” because they care about people. Another can choose it because people have become numbers. A third may choose it until the cost arrives at their own door, at which point the great machinery of principle mysteriously breaks down.

My MSc dissertation did not solve moral psychology, which is rude of it after all that work. But it did sharpen one idea I still come back to: morality is not only about what people choose. It is also about what their choices cost them, how quickly they decide, and whether their principles survive contact with self-interest.

A moral dilemma is never only about the lever.

Sometimes it is about who has to touch it.

References

Pass, J.-C. (2021). An exception to the rule: Dark Triad and moral decision making: The effect of time and self-cost on Dark-Triad personality traits when making moral judgments [Unpublished master’s dissertation]. Keele University.